1998. The phone bill arrived at home. My mother went crazy over the amount of money she had to pay. I used a primitive 14 kbps modem that took about 5 minutes to connect, and every minute of connection was charged like a regular phone call. Chatting with people worldwide in moderated spaces dedicated to specific topics was the only thing to do. Long nights and conversations, and maybe the first foundational moments of what would later become social networks.

In that era, the notion of existence rested on the motto “If you are not there, you don’t exist”. It started a gold rush. This created a lot of pressure and fear of being (or not being) there, while nobody knew how to be there. It was so new and oversold that companies over-invested in it, resulting in the famous dot-com bubble burst. Many lost tons of money, and many were fired. Sounds familiar?

Yet, this era also birthed a remarkable opportunity – to shift focus from pursuing wealth to harnessing technology’s value. Newspapers pioneered free online news platforms, while blogs and magazines flourished, granting swift access to new information and points of view. E-commerce emerged; Amazon moved from selling books to everything, and the music industry overcame the Napster crisis through technological solutions like iTunes and Spotify.

Social networks bridged connections with new and forgotten friends, driving sharing and learning. Countless startups emerged, creating disruptions in sectors such as delivery, transportation, and banking.

But somehow, a dark side emerged.

Banners, cookies, and lots of spam

Banners appeared the moment the Internet became accessible to the masses. Whether static or animated GIFs, they were on almost every website: first one, then a few, usually not very prominent.

Sending unsolicited emails was normal, and spam became the first seriously annoying experience. After years, some countries realized that regulation was needed. Today, apart from a few places that haven’t regulated it yet, spam is definitely less frequent. However, newsletter sign-ups on any site can still bring dozens of new emails every week.

Getting better at measuring how people visited pages and being more effective at serving ads to specific segments became the first big moment of change. Improved conversion rates became the new religion. From simple blogs to mass media, websites started gathering more information. First for their own analysis, later to be sold to third-party companies without our knowledge. Finally, getting data, massive amounts of it, became the goal.

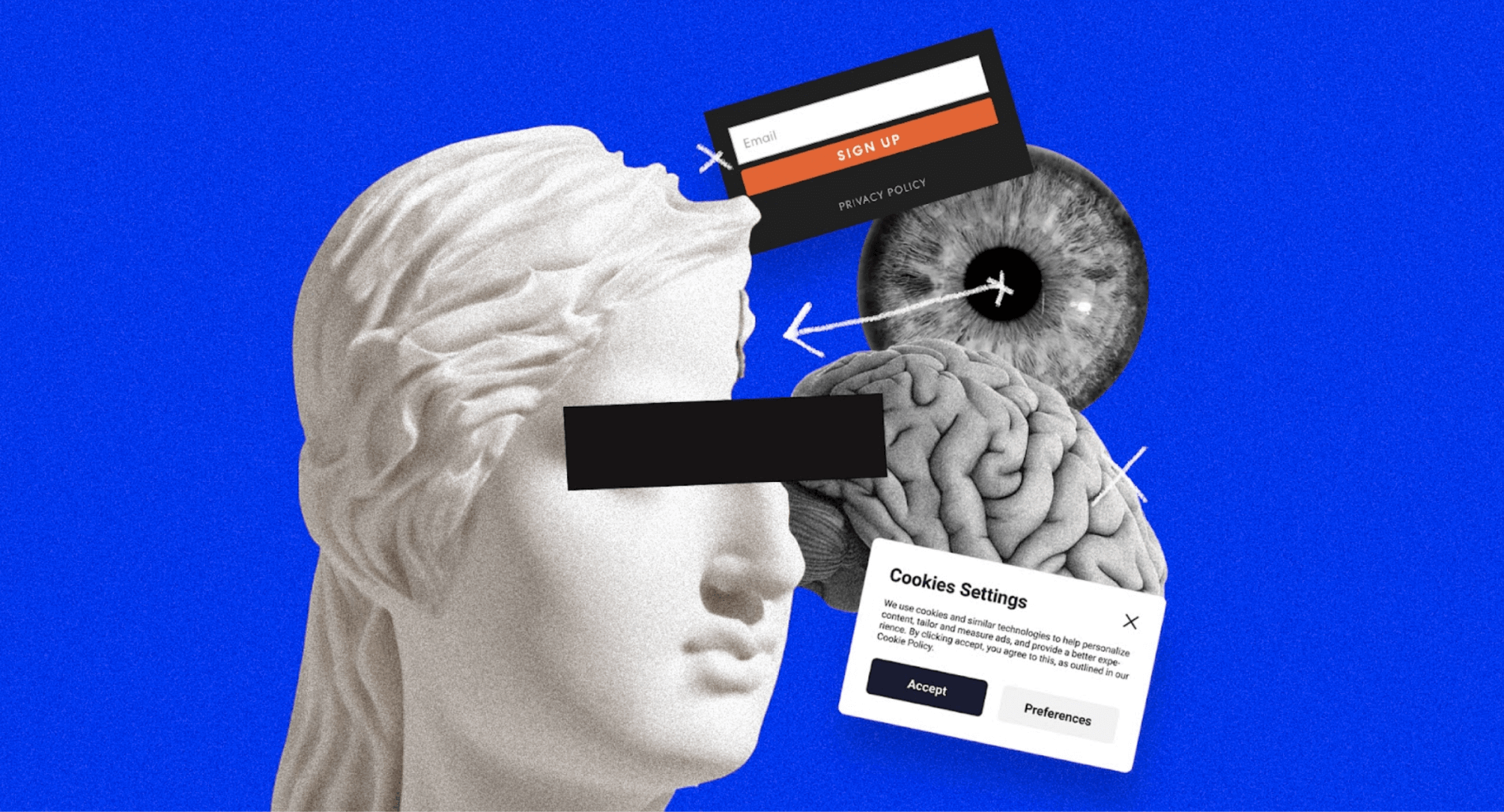

After some additional regulations were implemented, every website was required to ask for our permission to install hundreds of cookies that let it gather information and track our behavior. It’s still like this today, to the point that it is not tracking anymore; it’s surveillance.

The digital advertising experience continued to evolve, and today the “experience” of the Internet consists of avoiding invasive newsletter sign-ups, ignoring constant subscription pop-ups, closing new windows with promotions, and avoiding terribly designed banners placed in every available white space.

A truly exhausting experience.

Notifications and Community Managers

Social networks grew and grew to a point nobody could have expected. Users came to number in the millions, partly thanks to the spread and use of smartphones. People expanded their networks, at first with just friends and acquaintances, and later including their favorite brands and artists.

Social media companies wanted us to stay glued to our feeds for as long as possible, and they decided, especially Facebook (Meta), to bombard us with notifications to achieve that goal.

The social media industry was not the first to use notifications, but it was the first to use them to generate constant traffic and growth. And it worked.

At the same time, brands found an opportunity to talk to their potential customers through the Internet. A new profession appeared: the Community Manager. And in some way, they managed to convince us that it was ok to talk to us every day, constantly. Engagement became a new metric. Brands started to create articles, news, and other media assets to remind us they were there. That’s how branded content was born. Today the Internet is flooded with content created by brands that has only one purpose: to sell something to you.

We now accept (or have gotten used to the fact) that brands talk to us all the time, every minute of the day without rest, in every channel, device, app… Think about it. Do we really need it?

Algorithms and the economy of attention addiction

Brands, mass media, and politicians have begun to invest more time and money in appearing on our social feeds more often than our friends. We went from connecting with friends for fun to becoming part of a more intricate system where we are exposed to strangers’ content and even share and defend (or fight) the opinions of these people we don’t know personally.

At the same time, tech companies have designed algorithms that show us this kind of content before our friends’ content. We are forced to see whatever is “succeeding” or trending on the Internet, a cynical way of saying whatever people are engaging with the most, or whatever someone paid the most to have bumped up. It doesn’t matter if it’s people hating each other, sharing fake news, or a quiz that is mining your data and your friends’ data. Nor does it matter if it’s a fake campaign designed to influence you politically using the data gathered with that same quiz.

Algorithms focus on keeping us engaged. The more time we spend there, the more ads they’ll place in our faces, the more money companies make. What is ironic is that we feel it is ok, because these feeds create a sense of entertainment and distraction. They have become an addiction for many of us. Check your own ‘screen time’ and decide for yourself.

This new behavior gave rise to a new term in the tech industry: “the economy of attention,” which normalizes addiction by creating a whole industry around it.

And here we are, giving tech companies the only thing we really have, our time, for free. More recently, we have seen companies wanting us to pay for it in the form of subscriptions. Not only do they capitalize on our time, but they also exploit the “status symbol” of our Internet presence by asking us to get verified with a badge.

Most of the time we spend on social media is generated by recommendations based on our profiles, clicks and history. All these recommendations are offered by algorithms whose goal is to keep us engaged.

Fake news, AI, and the most critical moment for truth

Fake news is sadly part of our reality. It is intentional. The goal is to distract, lie, defame, or manipulate. A good headline lie can spread ten times faster than the fact that refutes it.

We tend to read headlines, not the articles in full. And even worse, we don’t check facts, think critically, or respect others online. The consequence is that we become more vulnerable, more polarized, and more aggressive online. We don’t hold conversations; we have arguments. We express ourselves in 280-character tweets.

Through generative AI, we can create audio or video impersonating anyone. No matter how unrealistic the spoken content is, it will look authentic. It can be Donald Trump saying he loves Mexicans so much that he’s willing to tear down the wall. Deepfakes will be a part of our reality; the question is how good or bad they’ll be.

A recent example: Orange, a French telecom company, set out to show that women’s football can be just as exciting as men’s. Its video shows what looks like footage of top French players such as Mbappé and Griezmann making great plays. Then, during the two-minute video, comes a revelation: it’s a deepfake. The male players’ faces had been superimposed onto the bodies of their female counterparts.

This video is driven by good intentions and creates much-needed awareness, yet it is also a clear example of how easily reality can be faked today.

AI is opening a vast new field of opportunities, and it is doing the same for fraud, lies, manipulation, and global instability. Reality today is fragile; it can be manipulated easily. It will be even easier to manipulate in the next few years. And we are not prepared for that.

And now what? Take responsibility

Today the Internet is noisy, exhausting, and full of effects that are damaging to us as individuals and as a collective. Doom-scrolling, addiction, reactivity, hyper-narcissism and hyper-individualism, social anxiety, lack of attention, polarization, the rise of political extremes, rigged elections, you name it…

We broke the Internet. And it is breaking us. In so many ways.

We must redefine our industry and how we put real and potential consequences at the center of our profession as designers.

Working on technology, design, and research… It’s becoming terribly challenging. It now demands a higher level of responsibility, ethics, and awareness. But, more importantly, we do need to make sure others don’t pay for the consequences of our decisions.

It will take a while to produce a needed common ethical code and a series of regulations such as the ones doctors and architects have. We need frameworks to protect and serve people’s lives first. Meanwhile, we also need to keep our own moral compass and stand for higher values, which is challenging given that most of us are hired to make more money. Making money is not a problem. But making money at the expense of others’ health is. That’s the red line.

Ethics, designing for everyone, higher moral standards, and a sense of responsibility are no longer negotiable.

We must stop making others pay for the consequences of our own acts (products). That is a significant asymmetry in the responsibility that has taken the world to the situation we’re in now.

We also need to choose better where we work (if we can choose). We should demand from the companies we work for that they align their behaviors to these standards and avoid proclaiming themselves “unicorns changing the world”. In fact, most of them don’t. They are more speculation, marketing, and show-off than an act of real value.

We must design for the people, keeping in mind our kids, partners, friends, and the most vulnerable people we know. What if they had to use the app, site, or service we are designing? Would they have to pay for consequences we don’t want?

It seems silly, yet keeping these thoughts in mind, as a framework to filter decisions, can profoundly change the way we design. Try it.

In recent years, I’ve seen many voices discussing inclusion, diversity, equality, accessibility, and design for people with disabilities; performing studies on human psychology; creating frameworks for circular, user-centric, human-centric, and life-centric design; and writing and speaking with the aim of changing the way we understand design for the better. Our awareness is definitely growing, and so is our sensitivity. We designers need to realize that we have the responsibility to do things better and that we have to work on fixing things as much as (or even more than) creating new ones. In all cases, we should keep the consequences and potential risks of our own work in mind.

My posts are just the result of my own experience, life moments, and opinions. Not everything here will work for everyone. Feel free to make them yours if they work for you, and ignore them if not.

About Aitor:

In the initial phase of his career, Aitor immersed himself in branding and communication, notably within the music industry. He dedicated his efforts to helping music companies, record labels, and artists create, develop, and expand their brands for a global audience through Branding, Communication and Digital Design.

Following this transformative period, Aitor embarked on a new chapter in Latin America, working in Peru and later making Mexico his home for the past six years. He led teams and projects at prominent firms such as Interbrand and frog, making significant contributions across diverse industries, including health, industrial engineering, banking, and fintech, among many others. His experience spans multiple countries, including Spain, Peru, Mexico, and the United States.

In his current role, Aitor’s dedication extends to coaching and teaching designers and companies in various countries. His focus lies in instilling a sense of responsibility while aiding in the identification and mitigation of the challenges technology presents, ultimately striving to bring awareness and solutions to the problems technology and design may cause.